Microsoft reportedly cut 200 to 400 Azure jobs in China as US and Chinese data rules tighten around cloud operations.

The post Microsoft Reportedly Cuts Hundreds of Azure Jobs in China appeared first on TechRepublic.


The RAMageddon crisis has Microsoft rethinking its Xbox console hardware business. Xbox CEO Asha Sharma and Xbox strategy chief Matthew Ball have both revealed this week that Microsoft is reevaluating plans for its next-generation Project Helix console and exploring "radically different" console business models in the meantime.

"We are working very hard to rethink everything that we can about Helix, which is a console we are committed to shipping, and we are very cognizant of the ways in which we need to change as a company to make sure it is affordable, to make sure that it's flexible," said Ball in an interview with The Game Business …

The Rule of Zero, Three, and Five, sometimes written 0/3/5, is a set of C++ guidelines about resource ownership and the special member functions. They exist because the alternatives are double-frees, dangling pointers, and silently broken copies. In this post we’ll work through the rules by tripping over each of those bugs in turn, then look at how to enforce them automatically with static analysis. Our colleague Anna will take us through her thoughts on this.

Let’s imagine a common scenario that most non-trivial codebases have to handle: you are writing a class, and that class owns a resource. A resource in this context is anything that needs to be cleaned up when it is no longer needed: a file handle needs closing, heap memory needs freeing, and so on. At first glance, this seems like an easy job for the destructor:

```cpp
// This is BAD CODE and SHOULD NOT BE COPIED
#include <vector>

struct TreeVertex {
    std::vector<TreeVertex*> children;
    // ...

    ~TreeVertex() {
        for (auto& vertex : children) {
            delete vertex;
        }
    }
};
```

Seems simple enough. Note, though, that nothing prevents us from copying an instance of `TreeVertex`. What happens then?

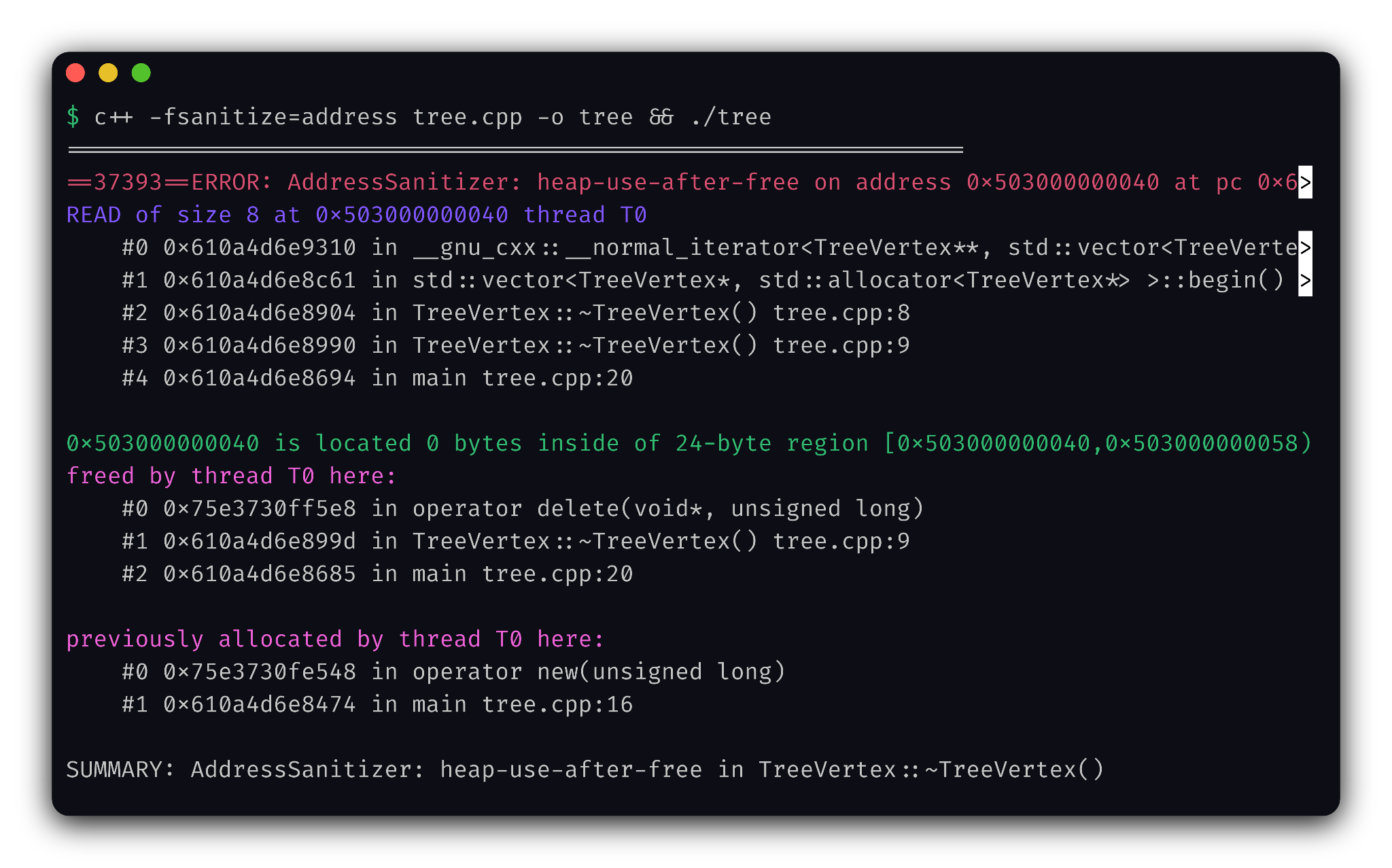

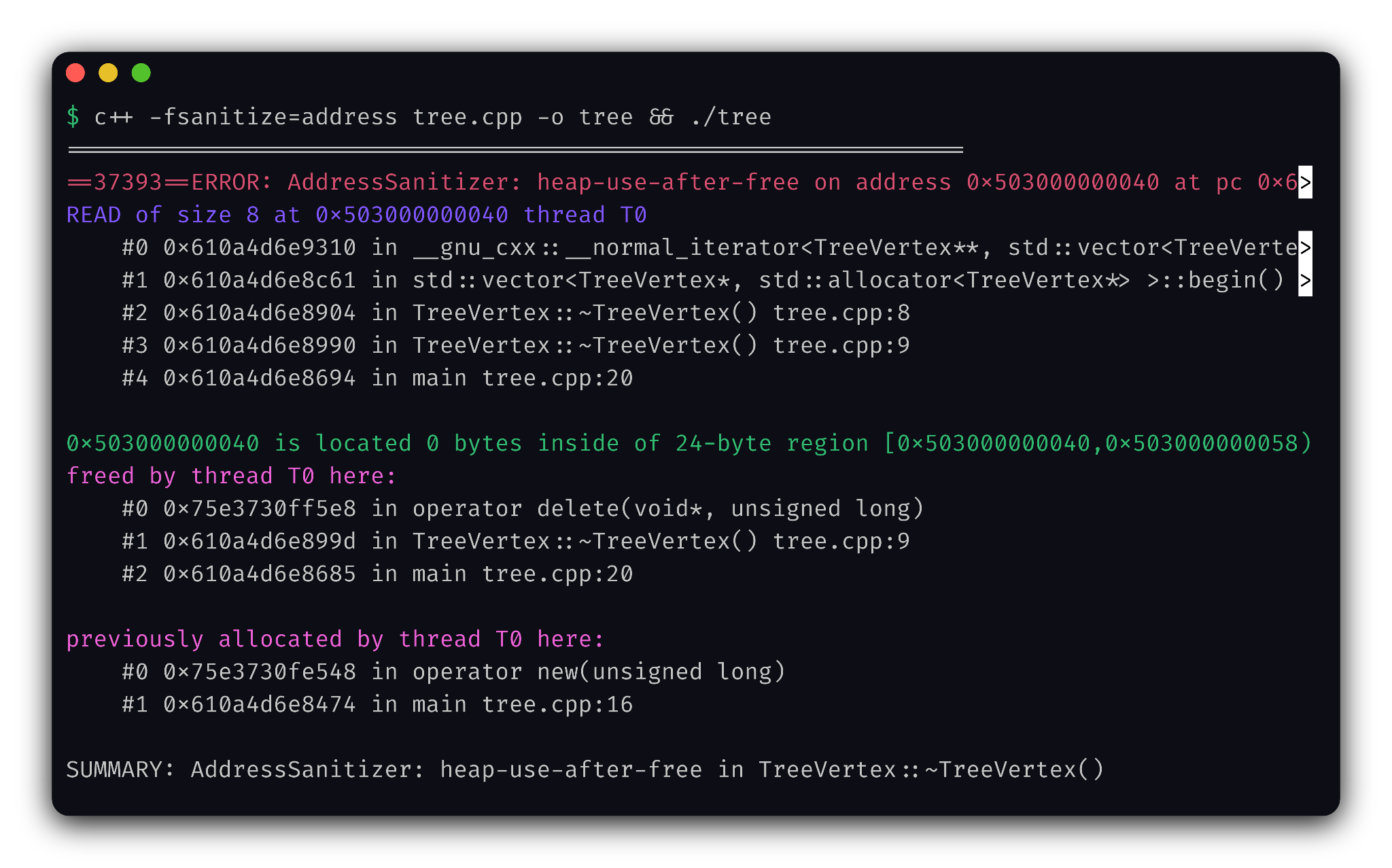

Uh oh.

Your program blew up because when you copied a TreeVertex, you implicitly made both the original and the copy believe they own pointers to the same (singular) set of children and are free to delete them. At destruction time, the first object to be destroyed deletes the children, and the second one tries to delete them again. Freeing the same memory twice is undefined behavior, and in practice it often crashes the program, as it did here. This is known as a “Double Free” bug, a classic amongst us memory managers.

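You might wonder how this compiled at all. To copy a class, you need a copy constructor. We never defined one, but luckily for us (ha!) the compiler generates one if no user-defined copy constructor exists, and that generated constructor simply copies the vector of pointers, not the vertices they point to.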

Anyway, a savvy reader who has watched a CppCon keynote or two will immediately notice a code smell: we are managing the lifetime of a bunch of pointers by hand, calling low-level operations like delete. Surely all of life’s problems will go away if we use, say, a unique_ptr?

```cpp
#include <memory>
#include <vector>

class TreeVertex {
    std::vector<std::unique_ptr<TreeVertex>> children;

public:
    // ...

    // ~TreeVertex() // Not even necessary anymore!
};
```

The savvy reader was technically correct – this is better, in the sense that the Earth no longer blows up. However, now your class can’t be copied at all, because unique_ptr solves the problem of two owners by deleting its copy constructor altogether.

We are talking about it like it’s a bad thing, but in real life this might be exactly what you want. Writing a fancy copy constructor that duplicates a resource isn’t always the right decision. Say your resource is an OS window: would it make sense to implicitly open a new window every time you accidentally pass an object by value?

If you have decided that your tree vertices are non-copyable, and that you will let unique_ptr manage your memory for you, then congratulations! You’ve just implemented the Rule of Zero: If you can avoid defining default operations, do.
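To make the Rule of Zero concrete, here is a minimal sketch of the unique_ptr version. The add_child and size helpers are hypothetical additions for illustration, not part of the original class. Because every member manages itself, the class declares no special members at all, and the compiler-generated move constructor is noexcept because std::vector’s is.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <type_traits>
#include <utility>
#include <vector>

// Rule of Zero: ownership lives entirely in std::unique_ptr, so the class
// declares no destructor, copy, or move members of its own.
class TreeVertex {
    std::vector<std::unique_ptr<TreeVertex>> children;

public:
    // Hypothetical helper: creates a new owned child and returns it.
    TreeVertex& add_child() {
        children.push_back(std::make_unique<TreeVertex>());
        return *children.back();
    }

    // Hypothetical helper: counts this vertex plus all of its descendants.
    std::size_t size() const {
        std::size_t total = 1;
        for (auto const& child : children) total += child->size();
        return total;
    }
};

// The implicit move constructor is noexcept because std::vector's is (C++17).
static_assert(std::is_nothrow_move_constructible_v<TreeVertex>);
```

A root with two children and one grandchild reports size() == 4, and moving it with std::move hands over the whole subtree without allocating. Trying to copy it, on the other hand, fails to compile.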

Let’s say we do want to copy our vertices, though. Let’s also say that a lower-level API needs raw access to the children array through an internal accessor, so we cannot use unique pointers.

```cpp
#include <vector>

class TreeVertex {
    std::vector<TreeVertex*> children;

public:
    // Declaring a copy constructor suppresses the implicit default one.
    TreeVertex() = default;

    TreeVertex** children_data() { return children.data(); }

    TreeVertex(TreeVertex const& other) {
        children.reserve(other.children.size());
        for (auto const& vertex : other.children) {
            children.push_back(new TreeVertex(*vertex));
        }
    }

    ~TreeVertex() {
        for (auto const& vertex : children) {
            delete vertex;
        }
    }
};
```

The copy constructor now works okay, but this wouldn’t be C++ if there wasn’t another footgun hidden inside the initial footgun. Something that may not be obvious at first glance is that these two lines call two different functions:

```cpp
TreeVertex my_vertex = other;
my_vertex = other;
```

The first one is indeed a copy constructor which we just meticulously defined. The second one calls a copy assignment operator – which luckily for us (ha!) the compiler generated in a similar way to the copy constructor:

```cpp
// This is BAD (compiler-generated) CODE and SHOULD NOT BE COPIED
TreeVertex& operator=(TreeVertex const& other) {
    children = other.children;  // copies the pointers, not the vertices
    return *this;
}
```

Notice how just by defining a destructor and following the string of bugs, we had to define three functions: the destructor, the copy constructor, and the copy assignment operator. Finally we have arrived at…

If you define a copy constructor, copy assignment, or destructor, you should define them all.
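One way to confirm that the two statements `TreeVertex my_vertex = other;` and `my_vertex = other;` really call different functions is a small instrumented type. Probe below is a hypothetical stand-in for TreeVertex that only counts calls, and it spells out all three Rule of Three members.

```cpp
#include <cassert>

// Hypothetical instrumented stand-in for TreeVertex: each copy operation
// bumps a counter so we can see which function a statement calls.
struct Probe {
    static inline int copy_constructions = 0;
    static inline int copy_assignments = 0;

    Probe() = default;
    Probe(Probe const&) { ++copy_constructions; }
    Probe& operator=(Probe const&) {
        ++copy_assignments;
        return *this;
    }
    ~Probe() = default;  // all three Rule of Three members are spelled out
};
```

After `Probe other; Probe my_vertex = other; my_vertex = other;`, each counter is 1: the declaration ran the copy constructor and the plain assignment ran the copy assignment operator.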

A seemingly correct copy assignment operator looks like this:

```cpp
// This is BAD CODE and SHOULD NOT BE COPIED
TreeVertex& operator=(TreeVertex const& other) {
    // Clear existing children
    for (auto const& vertex : children) {
        delete vertex;
    }
    children.clear();

    // Copy other's children
    children.reserve(other.children.size());
    for (auto const& vertex : other.children) {
        children.push_back(new TreeVertex(*vertex));
    }
    return *this;
}
```

Let’s forget for a moment how many times we duplicated code in this simple class (we’ll come back to that). If you want to be mean to your junior devs, ask them to find a bug here.

The bug stems from the fundamental difference between a constructor and an assignment operator: the object that this points to already exists before the function is called. This means that you can write something like this:

```cpp
my_vertex = my_vertex;
```

In other words, this and &other point to the same object. Instead of doing nothing, our copy assignment operator deletes every child and then copies from a list it has just emptied, obliterating all the children.

Luckily, some smart people came up with a solution to both the self-assignment problem and the code duplication problem. It’s called the copy-and-swap idiom.

A swap function is a special kind of function in C++ that “swaps” the contents of two objects. There is no compiler magic going on here; we have all just collectively agreed to use this name. The signature looks like this:

```cpp
void swap(T& a, T& b);
```

A default template std::swap does the generic swapping using a temporary variable, but if we know how our class works we can actually do better. Let’s define our own swap as a free friend function:

```cpp
class TreeVertex {
    // ...

public:
    friend void swap(TreeVertex& a, TreeVertex& b) noexcept {
        using std::swap;
        swap(a.children, b.children);
    }
};
```

First, we bring std::swap into scope so that it takes part in overload resolution (this is not necessary in our example, but it is a good general rule of thumb). Then we swap our children, letting the compiler find the most appropriate overload of swap, which turns out to be the std::swap overload for std::vector: it exchanges the two vectors’ internal buffers in constant time instead of copying elements.

We can now rewrite our copy assignment operator like so:

```cpp
TreeVertex& operator=(TreeVertex other) {
    swap(*this, other);
    return *this;
}
```

Notice that the signature has changed: we now take other by value, delegating the copying work to the compiler. Then we swap our children, and leave other to be destructed, delegating the deleting work to the destructor. No code duplication, and no bugs with self-assignment.
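Putting the pieces together, a minimal sketch of the complete Rule of Three and a Half version of TreeVertex might look like this. The add_child and size helpers are hypothetical additions for illustration; the destructor, copy constructor, swap, and by-value copy assignment follow the snippets above.

```cpp
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

class TreeVertex {
    std::vector<TreeVertex*> children;

public:
    TreeVertex() = default;

    // Deep copy: every child is cloned recursively. (Not exception-safe if a
    // `new` throws midway; kept simple for illustration.)
    TreeVertex(TreeVertex const& other) {
        children.reserve(other.children.size());
        for (auto const* vertex : other.children) {
            children.push_back(new TreeVertex(*vertex));
        }
    }

    ~TreeVertex() {
        for (auto* vertex : children) delete vertex;
    }

    friend void swap(TreeVertex& a, TreeVertex& b) noexcept {
        using std::swap;
        swap(a.children, b.children);
    }

    // Copy-and-swap: `other` is a fresh copy; when it goes out of scope its
    // destructor frees the children we swapped into it.
    TreeVertex& operator=(TreeVertex other) {
        swap(*this, other);
        return *this;
    }

    // Hypothetical helpers for illustration.
    TreeVertex& add_child() {
        children.push_back(new TreeVertex());
        return *children.back();
    }

    std::size_t size() const {
        std::size_t total = 1;
        for (auto const* vertex : children) total += vertex->size();
        return total;
    }
};
```

Self-assignment through a reference (`TreeVertex& alias = b; b = alias;`) now leaves b intact, because the copy is made before anything is released, and modifying the copy afterwards does not touch the original.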

Defining swap inside the class is another idiom, called hidden friends: a swap declared as an ordinary free function would be considered for overload resolution even when you are swapping things that are completely unrelated to your TreeVertex. A hidden friend is only found through argument-dependent lookup on TreeVertex arguments, which prevents accidental implicit conversions, improves compilation times, cleans up compilation errors, and makes your cat love you.

Since defining swap is so useful, the Rule of Three is also sometimes called the Rule of Three and a Half.

We are almost at the end of today’s discussion, but there is one more thing we need to talk about: the move semantics introduced in C++11. If your company has not adopted C++11 yet, you should stop reading this blog and go update your CV.

Unlike a copy, a move operation is allowed to steal the contents of the other object. For standard library types, the standard specifies that a moved-from object is left in a valid but unspecified state, and user-defined types conventionally follow the same rule; in practice, a moved-from object is usually only good for destruction or reassignment. Allowing an object to be moved is a very powerful optimization, so let’s define a move constructor and a move assignment operator using our amazing swap function:

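```cpp
TreeVertex(TreeVertex&& other) noexcept {
    swap(*this, other);  // *this starts empty, so other ends up empty
}

// Pair this with a `TreeVertex const&` copy assignment: next to the by-value
// operator= from the copy-and-swap section, it makes `a = std::move(b)` ambiguous.
TreeVertex& operator=(TreeVertex&& other) noexcept {
    swap(*this, other);
    return *this;
}
```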

Piece of cake, and now you know the modern version of the Rule of Three, the Rule of Five:

If you have a destructor or either copy function (constructor or assignment), you should probably define both copy functions and both move functions.

(This is also known as the Rule of Five and a Half, because of the swap function.)

Why mark the move operations noexcept? It is an important performance optimization. Standard containers like std::vector aim to provide a strong exception guarantee during reallocation, so if your type is copyable and its move constructor might throw, they discard the move entirely in favor of an expensive-but-recoverable copy.

This is mentioned in the notes for std::move_if_noexcept. Our swap is also marked noexcept. We don’t need it as such for our own code, since what’s important for us is just that it does not actually throw anything. But third-party code which conditionally optimizes based on std::is_nothrow_swappable would care about the correct label, so it’s a good practice.

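Will the compiler generate the move operations for us? Not this time! Unlike the copy constructor and copy assignment operator, the move constructor and move assignment operator are not implicitly declared if the class has a user-declared copy constructor, copy assignment operator, or destructor.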
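A minimal sketch of a full Rule of Five (and a half) TreeVertex follows, again assuming hypothetical add_child and size helpers. One adjustment relative to the earlier copy-and-swap snippet: copy assignment takes `TreeVertex const&` and does the copy-and-swap inside, because keeping the by-value operator= next to `operator=(TreeVertex&&)` makes `a = std::move(b)` ambiguous. The static_asserts check the noexcept guarantees discussed above.

```cpp
#include <cassert>
#include <cstddef>
#include <type_traits>
#include <utility>
#include <vector>

class TreeVertex {
    std::vector<TreeVertex*> children;

public:
    TreeVertex() = default;

    TreeVertex(TreeVertex const& other) {
        children.reserve(other.children.size());
        for (auto const* vertex : other.children) {
            children.push_back(new TreeVertex(*vertex));
        }
    }

    // Starts empty, then takes other's children; other is left empty.
    TreeVertex(TreeVertex&& other) noexcept { swap(*this, other); }

    TreeVertex& operator=(TreeVertex const& other) {
        TreeVertex copy(other);  // if this throws, *this is untouched
        swap(*this, copy);       // our old children die with `copy`
        return *this;
    }

    // Our old children now belong to `other` and are freed when it is destroyed.
    TreeVertex& operator=(TreeVertex&& other) noexcept {
        swap(*this, other);
        return *this;
    }

    ~TreeVertex() {
        for (auto* vertex : children) delete vertex;
    }

    friend void swap(TreeVertex& a, TreeVertex& b) noexcept {
        using std::swap;
        swap(a.children, b.children);
    }

    // Hypothetical helpers for illustration.
    TreeVertex& add_child() {
        children.push_back(new TreeVertex());
        return *children.back();
    }

    std::size_t size() const {
        std::size_t total = 1;
        for (auto const* vertex : children) total += vertex->size();
        return total;
    }
};

static_assert(std::is_nothrow_move_constructible_v<TreeVertex>);
static_assert(std::is_nothrow_move_assignable_v<TreeVertex>);
```

With these signatures, `c = b` picks the copy assignment and `d = std::move(c)` picks the move assignment without ambiguity, and std::vector<TreeVertex> can move elements during reallocation.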

Big thanks to the excellent Stack Overflow post by GManNickG, which has been my reference on this topic for the past 10 years.

The bugs in this post (a double free, broken self-assignment, and a move constructor that quietly throws) can survive code review and surface in production. Static analysis can catch them at commit time.

Qodana for C++ wraps Clang-Tidy (among other things), and three checks map directly onto what we walked through above:

- cppcoreguidelines-special-member-functions is the Rule of Five at its source: if you define a destructor, copy constructor, or copy assignment operator, this check requires you to define (or `= default` / `= delete`) the rest. It’s C.21 from the C++ Core Guidelines.
- bugprone-unhandled-self-assignment catches the exact self-assignment bug from earlier in this post: a user-defined copy assignment operator with no self-check and no copy-and-swap.
- performance-noexcept-move-constructor flags non-noexcept move constructors and assignment operators, so that std::vector reallocation actually uses your move instead of silently falling back to copy.

All three are enabled by default (in the qodana.starter profile), or you can add them to your .clang-tidy to be sure:

```yaml
Checks: >
  cppcoreguidelines-special-member-functions,
  bugprone-unhandled-self-assignment,
  performance-noexcept-move-constructor
```

Then just run Qodana — locally with qodana scan, or in CI via GitHub Actions, GitLab, Jenkins, or TeamCity.

As for the Rule of Zero: there’s no single check that says “delete this destructor and use a unique_ptr instead” — Rule of Zero is more of a design discipline. What Qodana can do is point you toward it: cppcoreguidelines-owning-memory flags raw owning pointers, and the modernize-* family (modernize-make-unique, modernize-make-shared, modernize-avoid-c-arrays) nudges code toward RAII types that remove the need for special members in the first place. Just add them to your .clang-tidy and Qodana will pick them up automatically:

```yaml
Checks: >
  ...
  modernize-make-unique,
  modernize-make-shared,
  modernize-avoid-c-arrays,
  cppcoreguidelines-owning-memory
```

Find out more about improving your code quality with Qodana.

Despite being a safe bet for 99% of use cases, the copy-and-swap idiom has a problem: copy performance. Recall that the copy assignment operator we wrote takes other by value, which means the object and all of its underlying storage are copied whether or not that is actually necessary. A smarter copy assignment could do something like this:

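```cpp
TreeVertex& operator=(TreeVertex const& other) {
    if (this == &other) return *this;  // Self-assignment check
    // Caveat: unlike copy-and-swap, this is not safe in general when other is
    // a descendant of *this (e.g. root = *root_child): assigning into our own
    // children can delete nodes of other while we are still reading them.

    // Reuse the children both trees have by assigning into them recursively.
    auto const common_size = std::min(children.size(), other.children.size());
    for (size_t i = 0; i < common_size; ++i) {
        *children[i] = *other.children[i];
    }

    // Free the children we have and other doesn't.
    for (size_t i = common_size; i < children.size(); ++i) {
        delete children[i];
    }
    children.resize(common_size);

    // Allocate only the children other has and we don't.
    children.reserve(other.children.size());
    for (size_t i = common_size; i < other.children.size(); ++i) {
        children.push_back(new TreeVertex(*other.children[i]));
    }
    return *this;
}
```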

It’s certainly more complex but achieves something important: the storage that was already present in the copied-to object can be reused, partially or fully, depending on who has more children. For our example in particular, the gains are as follows:

Given N = children.size() and M = other.children.size():

| | Textbook copy-and-swap | Custom copy function |
|---|---|---|
| calls to new | M | max(0, M-N) |
| calls to delete | N | max(0, N-M) |

Also note that these gains are recursive, since each child has its own children. Assigning a subtree to itself is completely free with the custom copy function.
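The top-level counts in the table can be checked with a small instrumented sketch. Everything here is hypothetical scaffolding: Counters, make, destroy, and add_leaves exist only to count allocations, and the two strategies are exposed as named member functions (assign_copy_and_swap, assign_reusing) rather than as operator= so that both fit in one class. The children are leaves, so only the top level contributes.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Hypothetical counters: every counted allocation/deallocation goes through
// TreeVertex::make / TreeVertex::destroy below.
struct Counters {
    static inline int news = 0, deletes = 0;
};

class TreeVertex {
    std::vector<TreeVertex*> children;

    static TreeVertex* make(TreeVertex const& src) { ++Counters::news; return new TreeVertex(src); }
    static void destroy(TreeVertex* v) { ++Counters::deletes; delete v; }

public:
    TreeVertex() = default;
    TreeVertex(TreeVertex const& other) {
        for (auto const* v : other.children) children.push_back(make(*v));
    }
    ~TreeVertex() { for (auto* v : children) destroy(v); }

    friend void swap(TreeVertex& a, TreeVertex& b) noexcept {
        std::swap(a.children, b.children);
    }

    // Textbook copy-and-swap: always copies all of other.
    void assign_copy_and_swap(TreeVertex other) { swap(*this, other); }

    // Reuses existing children, mirroring the custom copy assignment above.
    void assign_reusing(TreeVertex const& other) {
        if (this == &other) return;
        auto const common = std::min(children.size(), other.children.size());
        for (std::size_t i = 0; i < common; ++i) children[i]->assign_reusing(*other.children[i]);
        for (std::size_t i = common; i < children.size(); ++i) destroy(children[i]);
        children.resize(common);
        for (std::size_t i = common; i < other.children.size(); ++i) children.push_back(make(*other.children[i]));
    }

    // Setup helper: adds n leaf children without touching the counters.
    void add_leaves(int n) {
        for (int i = 0; i < n; ++i) children.push_back(new TreeVertex());
    }
};
```

With N = 3 existing children and M = 5 in the source, copy-and-swap performs 5 counted allocations and 3 deallocations, while the reusing version performs 2 allocations and no deallocations, matching the table.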

Know this caveat but always remember: premature optimization is the root of all evil.

Thank you Anna Zhukova for your contribution to the blog! Anna works on Qodana for C++. Try it today if you haven’t already or view the documentation.

Today, we’re releasing Adaptive Spec-driven Scoring for Evaluation and Regression Testing (ASSERT), an open-source framework for turning natural-language behavior specifications into executable evaluations. Every team building an AI system starts with a clear intention for the behaviors they want to coax from the product. Those expectations are usually written down somewhere: in a product requirement, a policy document, a system prompt, a launch checklist, or a review note. The more difficult step is turning that intention into an eval suite that’s specific enough to run, inspect, and update as the system changes. ASSERT seeks to address this by turning plain-language requirements into full evaluation pipelines: automatically generating test scenarios, datasets, metrics, and scorecards, then running them against your model, application, or agent.

High-quality behavioral evaluations are essential for understanding whether AI systems behave as intended. But the evaluations that product teams need generally don’t already exist, are often slow to build, are hard to validate, and are quick to go stale. Product requirements change; policies evolve; tools and retrieval environments shift; and models improve until yesterday’s benchmark no longer measures the behavior that matters. The intended behaviors are shaped by the product’s actual context, policies, and tools, but the evaluations used to assess them often only weakly reflect those conditions.

The gap is most visible in application-specific behavior. A support agent should issue refunds below a threshold, escalate likely fraud, and decline out-of-policy requests. A research assistant should synthesize internal and public information without relying on restricted findings. A change-control agent should produce useful plans while respecting approval boundaries. Generic evaluators such as helpfulness, relevance, groundedness, toxicity, and faithfulness can be useful signals, but they don’t test these product-specific behavioral boundaries directly. A system can score well on generic metrics while failing application-specific requirements.

ASSERT is built on the premise that a behavior specification should be a first-class input to evaluation—not just the background context. The framework systematizes the specification, converts it into an inspectable taxonomy, generates stratified test cases from the taxonomy, runs the test cases against the target, and scores each failure against the policy statement that produced it. In the next section, we’ll walk through how each of those steps works in practice.

The pipeline has four stages. First, ASSERT turns a broad behavior specification into an explicit concept specification, which is then converted into a granular, editable behavior taxonomy with suggested permissible and impermissible behaviors. Next, it generates stratified test cases over the dimensions the developer declares. Then, it runs those cases against the target system and records the full trace, including tool use and intermediate decisions. Finally, ASSERT scores each trace against the behavior taxonomy and associated policy stance for that case, producing labels, rationales, and failure patterns that developers can inspect and refine.

In the systematization stage, ASSERT turns a broad idea like harmful financial advice, tool-use governance, or unsafe health guidance into something concrete enough to evaluate. Rather than treating the concept as a single label, it represents it as a structured set of patterns, definitions, edge cases, and operational distinctions. Following Agarwal et al. (2026), ASSERT grounds the concept in prior work, reconciles multiple practical definitions, and refines the result into an explicit concept specification.

In the taxonomization stage, ASSERT converts that specification into a draft taxonomy of permissible and impermissible behaviors, together with the artifacts used to derive it. The user supplies the behavior description, the number of test-set samples they want, and a systematizer model. The output is an editable behavior taxonomy that developers and policy experts can review, revise, and validate before the next stage runs.

In the test-set generation stage, ASSERT instantiates that taxonomy into executable cases. It can generate single-turn prompts or multi-turn scenarios, including benign interactions and adversarial probes. Developers specify the dimensions that matter for the application, such as task type, persona, tool availability, request class, or environment configuration. ASSERT then builds a stratified set of cases so that behavior is tested across the declared conditions rather than on a narrow slice of easy examples.
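Stratification over declared dimensions can be illustrated with a short sketch: enumerate every combination of dimension values, then spread the requested cases across those combinations. This is a hypothetical illustration of the idea, not ASSERT’s implementation; the names Dimensions, enumerate_cells, and stratify are invented here, and a real generator would also produce the prompt or scenario text for each cell.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <string>
#include <utility>
#include <vector>

// A declared dimension is a name plus its possible values,
// e.g. {"persona", {"novice", "expert"}}.
using Dimensions = std::vector<std::pair<std::string, std::vector<std::string>>>;
// One cell assigns a value to every dimension.
using Cell = std::map<std::string, std::string>;

// Cartesian product of all dimension values.
std::vector<Cell> enumerate_cells(Dimensions const& dims) {
    std::vector<Cell> cells{Cell{}};
    for (auto const& [name, values] : dims) {
        std::vector<Cell> next;
        for (auto const& cell : cells) {
            for (auto const& value : values) {
                Cell extended = cell;
                extended[name] = value;
                next.push_back(std::move(extended));
            }
        }
        cells = std::move(next);
    }
    return cells;
}

// Round-robin the requested cases over the cells, so every combination is
// covered once n_cases >= number of cells.
std::vector<Cell> stratify(Dimensions const& dims, std::size_t n_cases) {
    auto const cells = enumerate_cells(dims);
    std::vector<Cell> plan;
    if (cells.empty()) return plan;
    for (std::size_t i = 0; i < n_cases; ++i) plan.push_back(cells[i % cells.size()]);
    return plan;
}
```

With two personas and two tool-availability settings there are four cells, and a request for eight cases yields exactly two per cell rather than clustering on whichever combination is easiest to write.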

In the inference stage, ASSERT runs those cases against the target. The target can be a model, an agent, or an application-level workflow. Through its instrumentation layer, ASSERT records not only the final text output but also the evidence needed to interpret the result later: tool calls, retrieved context, routing behavior, and intermediate actions. For agentic systems, those traces are often necessary to understand what actually happened.

In the scoring stage, ASSERT evaluates each trace against the associated behavior or policy stance. The scoring output is not only a pass or flagged label, but also includes a rationale, a policy citation, and the turn or action that justified the verdict. The policy citation refers to the specific taxonomy behavior or developer-provided policy decision that the judge used to support the verdict.

We conducted two internal validation studies for ASSERT. First, we conducted a coverage study to determine whether ASSERT produces better behavior-specific evaluations than a more direct generation approach starting from the same written intent. Then, we evaluated the LLM judges against human review.

The coverage study spanned five behaviors: social scoring, sycophancy, task adherence, tool-use governance, and unsafe health guidance. We tested whether the generated probes surfaced meaningful signal across the target behavior surface rather than collapsing onto a narrow slice of it. Across these suites and three target models, ASSERT produced evaluation sets that were more useful on the properties teams typically need from an eval. Compared with a comparable in-house baseline, ASSERT covered roughly 1.2x as much of the intended behavior space, surfaced about 1.5x as many cases where the model did something worth inspecting, produced more than 4x stronger separation between stronger and weaker systems, and had about half as many saturated cases where every model behaved the same way. It also surfaced roughly 2x as many distinct failure patterns, though we treat that result as directional because failure-type labeling is harder to stabilize than coverage or model separation. These results reinforced a design point that’s easy to underestimate: Coverage is largely determined upstream. If the behavior is underspecified, the generated dataset will be, too. ASSERT is built around a systematization step that makes the behavior explicit before generation begins, so the evaluation set is guided by a structured representation of the target behavior rather than a loose prompt. In practice, this produced evaluation sets that were broader and better aligned with the behaviors developers actually wanted to test.

Second, we validated the judges directly against human review. Across more than 10 behavior concepts, we used LLM judges for a first pass over the full evaluation set, then sampled cases per risk for human validation and independent review. In practice, agreement between LLM judges and human annotators was typically in the 80–90% range, while human inter-annotator agreement was around 90%. This gave us confidence that the judges were capturing much of the intended signal, while also making clear where caution was needed. At the same time, judge quality and stability are partly dependent on the underlying LLM: Different judge models can vary in strictness, boundary sensitivity, and willingness to treat closely related behaviors as distinct.

Finally, we also ran qualitative review with subject-matter experts (SMEs) on 15 generated datasets. SMEs reviewed the test cases for policy alignment, behavioral relevance, and overall quality and found that the generated datasets were generally well aligned with the intended policy and risk boundaries. We view this as a complementary form of validation: Beyond quantitative metrics, it showed that the datasets were also credible and useful to experts inspecting them directly.

Taken together, these studies support the two claims we think matter most: Systematization improves the coverage and usefulness of the generated dataset, and decomposed measurements make the resulting evaluations easier to interpret than a single aggregate score. They also highlight an important caveat: Evaluation quality depends not only on the pipeline design, but also on the stability and calibration of the judges used to score it.

>“My favorite thing about ASSERT is that the eval is easy to configure and reason about. I describe the behavior I care about in YAML, point it at a real agent, and get artifacts back. Not just pass/fail. They show why the judge made each call. That openness matters. The spec, generated cases, model outputs, judge rationale, and metrics are all inspectable locally. The eval feels auditable, not like a black box.”

– Lorenze Jay, Open Source Lead, CrewAI

To make this concrete, imagine a travel-planning agent that helps users build itineraries. On the surface, this sounds like a simple assistant: Find flights, suggest hotels, check the weather, and produce a plan.

But a real travel agent has to do much more than answer a question. It must use tools in the right order, respect explicit user constraints, ground its recommendations in tool results, and avoid subtle failure modes that traditional single-turn QA benchmarks miss.

For example, the agent shouldn’t invent flight prices. It shouldn’t agree with an itinerary that exceeds the user’s budget. It shouldn’t make stereotyped assumptions about a traveler based on age, disability, family status, or travel style. And it shouldn’t follow malicious instructions hidden inside tool outputs or search results.

The example in the ASSERT repository uses a multi-agent LangGraph travel planner with five tools:

- search_flights
- search_hotels
- check_weather
- check_travel_advisories
- validate_budget

It operates within a six-turn budget, and every run records the full agent trace (tool calls, arguments, tool results, routing decisions, and intermediate state) alongside the final response. That trace evidence is what lets the judge cite the specific action responsible for each verdict, not just the final reply: the evaluator can judge not only whether the final answer was acceptable, but why the agent failed and which action caused the failure.

The full example lives in examples/travel_planner_langgraph/.

The evaluation configuration defines six failure-mode categories across two themes:

To run the evaluation:

```shell
assert-eval run --config eval_config.yaml

# To inspect the results
assert-eval results status \
  --results-dir "$PWD/artifacts/results" \
  travel-planner-langgraph-v1 \
  demo-1
```

ASSERT produces a set of artifacts under the run directory:

- taxonomy.json: the concept spec produced by systematization
- test_set.jsonl: the stratified prompts and multi-turn scenarios
- inference_set.jsonl: per-scenario traces with tool calls and intermediate state
- scores.jsonl: per-trace verdicts with rationale and policy citation
- metrics.json: the aggregate roll-up

Example results:

The dimensions are reported separately rather than rolled into a single number: The same five scenarios produce 40% over-refusal and 60% policy violation, and those aren’t the same failures. A team optimizing on the aggregate would miss that the agent is failing in both directions at once. The results can also be inspected in a UI widget.

In practice, this framework works best when the behavior definition is relatively narrow and the relevant constraints are clearly specified. Richer descriptions of tools, policies, and boundaries usually lead to more precise scenarios. It’s also worth treating aggregate scores cautiously. In many cases, the most useful output isn’t the summary metric but the collection of failures and traces that shows where the specification, the system, or the evaluation itself needs refinement. ASSERT doesn’t remove the need for judgment in evaluation design. Vague specifications still produce vague scenarios. Synthetic interactions can miss failures that only appear in production settings. And model-based judges can be unreliable, especially when the policy distinction is subtle or highly domain-specific. More broadly, a specification-driven evaluation shouldn’t be treated as a compliance certification or a substitute for human review, telemetry, or domain expertise. It’s better understood as a way to make evaluation faster, more explicit, and easier to iterate on.
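Keeping failure dimensions separate amounts to computing one rate per dimension from per-scenario verdicts. The Verdict record and rate function below are hypothetical and do not reflect the actual scores.jsonl schema; they only illustrate why a per-dimension roll-up carries more information than a single pass rate.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical per-scenario verdict; field names are illustrative only.
struct Verdict {
    std::string scenario_id;
    bool over_refusal;      // refused something the policy permits
    bool policy_violation;  // did something the policy forbids
};

// Fraction of verdicts flagged on one dimension, computed independently of
// every other dimension.
template <typename Pred>
double rate(std::vector<Verdict> const& verdicts, Pred flagged) {
    if (verdicts.empty()) return 0.0;
    std::size_t hits = 0;
    for (auto const& v : verdicts) hits += flagged(v) ? 1 : 0;
    return static_cast<double>(hits) / verdicts.size();
}
```

For five hypothetical verdicts where two scenarios are flagged as over-refusals and three as policy violations, rate returns 0.4 for over-refusal and 0.6 for policy violation. A single pass rate over the same five scenarios would be 0, hiding which way the agent failed.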

ASSERT is open-source under the MIT license and available today.

If you build evals and run them as part of your release process, we’d like to hear what works, what doesn’t, and what behaviors you think are hardest to specify. ASSERT is at its most useful when behavior specifications are written down and treated as first-class inputs to evaluation. We’re releasing it in that spirit.

PM team: Mehrnoosh Sameki, Minsoo Thigpen, Chang Liu, Abby Palia, Hanna Kim

Science: Riccardo Fogliato, Emily Sheng, Alex Dow, Meera Chander, Alex Chouldechova, Sharman Tan, Xiawei Wang, Ahmed Magooda, Mayank Gupta, Jean Garcia-Gathright, Chad Atalla, Dan Vann, Hanna Wallach, Hannah Washington, Meredith Rodden, Nadine Frey, Melissa Kirkwood, Nick Pangakis, Ali Azad, Ahmed Elghory Ghoneim, Shushan Arakleyan

Eng team: Mohamed Elmergawi, Jake Present, Aaron Aspinwall, Yeming Tang

Design: Sooyeon Hwang, Becky Haruyama

Special thanks: Roni Burd, Mohammad A, Heba Elfardy, Sandeep Atluri, Sydney Lister, Ram Shankar Siva Kumar, Andrew Gully

The post Turn specs into evals for any agent with ASSERT appeared first on Microsoft Security Blog.

Ever watched GitHub Copilot CLI extract a JAR file to a temporary directory, grep through .class files, and piece together an API signature from raw bytecode? The agent is resourceful, but without a language server, that’s the best it can do.

The Language Server Protocol (LSP) is the standard that powers go to definition, find references, and type resolution in editors like VS Code. It works just as well in the terminal. The LSP Setup skill automates the installation and configuration of LSP servers for Copilot CLI, so the agent gets precise, structured answers about your code instead of relying on text search heuristics.

In this post, you’ll learn how the skill works under the hood, see the configuration format it generates, and get set up for any of the 14 languages it supports today.

Without an LSP server, the agent in GitHub Copilot CLI reverse-engineers API information through text search and binary extraction. For a Java project, that might look like:

```shell
# Find the dependency JAR
find ~/.m2/repository -name "*httpclient*.jar"

# Extract it to a temp directory
mkdir /tmp/httpclient && cd /tmp/httpclient
jar xf ~/.m2/repository/org/apache/httpcomponents/httpclient/4.5.14/httpclient-4.5.14.jar

# Search extracted class files for a method
grep -r "execute" --include="*.class" .
```

For Python, the agent might cat files inside site-packages. For TypeScript, it walks node_modules. These text-based approaches work for simple cases, but they pattern-match over raw text rather than performing semantic analysis, so they miss generics, overloads, and transitive types, and they can’t meaningfully read compiled bytecode. That’s exactly the gap a language server closes.

An LSP server solves this structurally. When the agent sends a textDocument/definition request for a symbol, the language server returns the symbol’s exact source location, and companion requests such as textDocument/hover return its fully resolved type and signature.
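The base-protocol framing behind such a request is simple enough to sketch: each JSON-RPC message is preceded by a Content-Length header giving the byte length of the body, followed by a blank line. The function below is an illustrative sketch, not Copilot CLI code; the URI and position are placeholders, positions are zero-based, and a real client must send an initialize request first and JSON-escape the URI.

```cpp
#include <cassert>
#include <string>

// Builds one framed textDocument/definition request (illustrative sketch).
std::string definition_request(int id, std::string const& uri, int line, int character) {
    std::string body =
        R"({"jsonrpc":"2.0","id":)" + std::to_string(id) +
        R"(,"method":"textDocument/definition","params":{"textDocument":{"uri":")" + uri +
        R"("},"position":{"line":)" + std::to_string(line) +
        R"(,"character":)" + std::to_string(character) + "}}}";
    // Header: byte count of the body, then CRLF CRLF, then the JSON payload.
    return "Content-Length: " + std::to_string(body.size()) + "\r\n\r\n" + body;
}
```

The server answers with a Location (or a list of them) for the definition, which the agent can read directly instead of extracting and grepping a JAR.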

When triggered, the skill executes a seven-step workflow:

The agent uses ask_user with a set of choices to determine which language the user needs LSP support for. This drives all subsequent steps.

The agent runs uname -s (or checks $env:OS / %OS% on Windows) to determine the target platform, because install commands vary by operating system: for example, brew install jdtls on macOS versus downloading from eclipse.org on Linux.

The skill includes a reference file (references/lsp-servers.md) with curated data for 14 languages: install commands per operating system, binary names, and ready-to-use config snippets. The agent reads this file and selects the matching entry.

The agent asks whether the config should be:

- ~/.copilot/lsp-config.json: applies to all repositories
- lsp.json at the repository root, or .github/lsp.json: scoped to a single project

Repository-level configuration takes precedence when both exist.

The agent runs the appropriate install command. For example:

```shell
# TypeScript on any OS
npm install -g typescript typescript-language-server

# Java on macOS
brew install jdtls

# Rust on any OS
rustup component add rust-analyzer
```

The agent writes or merges an entry into the chosen config file. The format uses an lspServers object where each key is a server identifier:

```json
{
  "lspServers": {
    "java": {
      "command": "jdtls",
      "args": [],
      "fileExtensions": {
        ".java": "java"
      }
    }
  }
}
```

Key rules the skill enforces:

- command must be on $PATH or an absolute path
- args typically includes "--stdio" for standard I/O transport (some servers, like jdtls, handle this internally)
- fileExtensions maps each extension (with leading dot) to a language identifier

The agent runs `which <binary>` (or `where.exe` on Windows) to confirm the server is accessible, then validates that the config file is well-formed JSON.
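The static rules above can be expressed as a small validation sketch over an in-memory form of one lspServers entry. LspServerEntry and check_entry are hypothetical names, not part of the skill or Copilot CLI, and the $PATH lookup is left to which or where.exe as described.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <vector>

// Hypothetical in-memory form of one "lspServers" entry; fields mirror the JSON keys.
struct LspServerEntry {
    std::string command;
    std::vector<std::string> args;
    std::map<std::string, std::string> file_extensions;  // ".java" -> "java"
};

// Returns the rule violations found (empty means the entry looks valid).
std::vector<std::string> check_entry(LspServerEntry const& entry) {
    std::vector<std::string> problems;
    if (entry.command.empty()) problems.push_back("command is empty");
    for (auto const& [ext, language] : entry.file_extensions) {
        if (ext.empty() || ext.front() != '.')
            problems.push_back("extension '" + ext + "' lacks a leading dot");
        if (language.empty())
            problems.push_back("extension '" + ext + "' has no language identifier");
    }
    return problems;
}
```

An entry like the jdtls example passes with no problems, while one with an empty command and an extension written as "ts" instead of ".ts" reports two.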

The skill comes with predefined language servers for several programming languages. If the agent encounters a language that isn’t already mapped, it will search for an appropriate server and walk you through manual configuration.

Once an LSP server is configured, the CLI agent can use go to definition, find references, and type resolution instead of extracting JARs or grepping through node_modules.

This means the agent spends less time on tool calls and produces more accurate code on the first pass. For you, that’s less time waiting while the agent decompiles a JAR file or greps through node_modules to answer a question your IDE already knows, and fewer wrong turns built on a misread signature. The agent reasons about your code with the same structured understanding you get from go-to-definition in your editor, so you can hand it bigger, gnarlier tasks and trust the result.

- … ~/.copilot/skills/ by running: `unzip lsp-setup.zip -d ~/.copilot/skills/`
- … /exit first. Then relaunch copilot so it picks up the new skill.
- … /exit, then relaunch), run /lsp to check the server status, and try go-to-definition on a symbol from one of your dependencies.

The skill is part of the Awesome Copilot project. It’s open source, so contributions and feedback are welcome!

The post Give GitHub Copilot CLI real code intelligence with language servers appeared first on The GitHub Blog.