
AI companies have been hell-bent on creating coding agents that use computers the way humans do: clicking buttons, scrolling through pages, and moving cursors around desktops. The promise is obvious, but the execution remains clunky.

The goal is to enable agents to operate software the same way people do, especially inside web apps and enterprise tools that lack clean APIs or integrations.

However, those systems can still feel cumbersome, often monopolizing browser sessions and working through tasks one screen at a time. That’s the problem OpenAI is now trying to tackle with a new Chrome extension for Codex.

Introduced on Thursday, the Codex Chrome extension lets agents operate directly inside a user’s live browser session, giving them access to signed-in websites, multiple tabs, and authenticated workflows without fully taking over the desktop.

The extension connects Chrome to the Codex app on Windows and macOS, allowing agents to interact with tools such as Gmail, Salesforce, LinkedIn, and internal web apps using the user’s existing browser state, cookies, and logged-in sessions.

Beyond screenshot-and-click agents

The launch builds on OpenAI’s “computer use” capabilities introduced in Codex in April. That allowed agents to operate desktop apps and browsers in the background while users continued working elsewhere on their machines.

However, OpenAI is now drawing a clearer distinction between generalized computer-use systems and a more browser-focused approach.


Previously, Codex largely relied on either structured plugins or broader computer-use tooling when interacting with browser workflows. Plugins remained the preferred route because they allowed Codex to work directly with services such as Slack, Gmail, and GitHub without manually navigating their interfaces.

But many workflows still live inside full web applications, internal dashboards, or authenticated browser sessions that agents cannot easily access through integrations alone.

In a demo video accompanying the launch, OpenAI developer experience lead Dominik Kundel said the new extension avoids the traditional “screenshot, reason, move the mouse” loop common in many computer-use systems, where agents repeatedly analyze what’s visible on-screen before deciding where to click next.

While Codex could already operate Chrome through OpenAI’s existing computer-use functionality, it effectively treated the browser like any other desktop application, interacting with it visually one step at a time. The new extension instead connects Codex directly into Chrome itself, allowing it to work across multiple tabs, logged-in sessions, and browser tasks in parallel.

That difference matters because much of modern software work increasingly happens inside browser-based SaaS tools, internal dashboards, and authenticated enterprise apps that often lack clean APIs or structured integrations.

“Sometimes there is no plugin, or there is one, but the thing you need is only available in the full web app,” Kundel says. “And sometimes the context is actually the existing logged-in Chrome session. This is what the Chrome extension is for.”


Chrome and Codex come together

The Chrome extension is installed through the Codex app itself. Users first open Codex, navigate to the Plugins section, and add the Chrome plugin, which then guides them through connecting Chrome and approving the required browser permissions.

Once installed, Codex can invoke Chrome directly from prompts such as: “@Chrome open Salesforce and update the account from these call notes,” or “summarize the feedback and sentiment from community forum comments.”

While OpenAI says Codex’s existing in-app browser remains better suited to localhost testing and frontend development tasks, the Chrome extension is designed for workflows that depend on a user’s live browser context and the full capabilities of Chrome itself. In the demo, Kundel showed Codex researching sentiment around product launches across multiple tabs simultaneously, identifying recurring feedback and pain points before compiling the results into a spreadsheet.

The extension is designed to sit between Codex’s structured plugins and its broader computer-use tooling. OpenAI says Codex can switch dynamically between integrations, Chrome, and its own in-app browser depending on the workflow, using direct plugins where possible before falling back to browser interaction when tasks require authenticated sessions or full web interfaces.
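The fallback order described here can be pictured as a small routing function. This is only a sketch under stated assumptions: the `Task` fields, the `choose_surface` name, and the rule that localhost URLs go to the in-app browser are illustrative choices based on the article's description, not OpenAI's actual logic or API.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Task:
    """Hypothetical task description; these fields are not from OpenAI's docs."""
    url: str
    plugin_covers_it: bool = False  # a structured plugin exposes what the task needs


def choose_surface(task: Task) -> str:
    """Pick a surface in the order the article describes (assumed policy)."""
    # Structured plugins are preferred whenever they can do the job.
    if task.plugin_covers_it:
        return "plugin"
    # Localhost testing and frontend work stay in the in-app browser.
    if urlparse(task.url).hostname in {"localhost", "127.0.0.1"}:
        return "in-app browser"
    # Everything else falls back to the user's live Chrome session.
    return "chrome extension"


print(choose_surface(Task("https://dashboard.internal.example/")))
```

A real agent would presumably weigh more signals than a URL and a flag; the point is only the ordering: integration first, browser second.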

One of the key aspects of the extension is that it doesn’t commandeer the user’s active browsing session; instead, it groups Codex activity into its own isolated Chrome tabs. That allows agents to continue researching, navigating, and compiling information in the background while users keep working elsewhere in the browser.

This deeper browser integration also requires more permissions than a typical chatbot interaction.

According to OpenAI’s documentation, the extension may request access to browsing history, tab groups, downloads, bookmarks, website data, debugger functionality, and communication with native applications.

The company says Codex asks for confirmation before interacting with new websites unless users explicitly disable those prompts. It also notes that browser tasks can expose sensitive context because page content, authenticated sessions, and browsing activity may become part of the information Codex uses while completing tasks.

Access all areas

The launch lands amid a wider push toward browser-native agents across the AI industry — and increasingly, the browser session itself is emerging as the key battleground.

Anthropic has been moving in the same direction. Its Claude Chrome extension, which has been in beta since August, gives Claude Code the same ability to operate inside a user’s existing browser session — accessing authenticated apps, working across tabs, and handling workflows that lack clean API integrations. The company also expanded Claude Code and Claude Cowork with broader computer-use capabilities on macOS earlier this year. Meanwhile, French AI startup HCompany recently launched HoloTab, a browser agent that navigates websites in Chrome without requiring site-specific integrations.

And so a clear pattern emerges: agents moving closer to where work actually happens. Not operating computers from the outside in, but working inside the applications, the sessions, the contexts where modern work already lives.

The post OpenAI Codex arrives in the browser with new Chrome extension appeared first on The New Stack.

- Beta (Including Beta Channel): Build 26220.8370

- Experimental (Including former Dev Channel): Build 26300.8376

- Experimental (26H1) – Including former Canary 28000 series: Build 28020.2075

- Experimental (Future Platforms) – Including Canary 29500 series: Build 29585.1000

Notable new features:

[Touchpad]

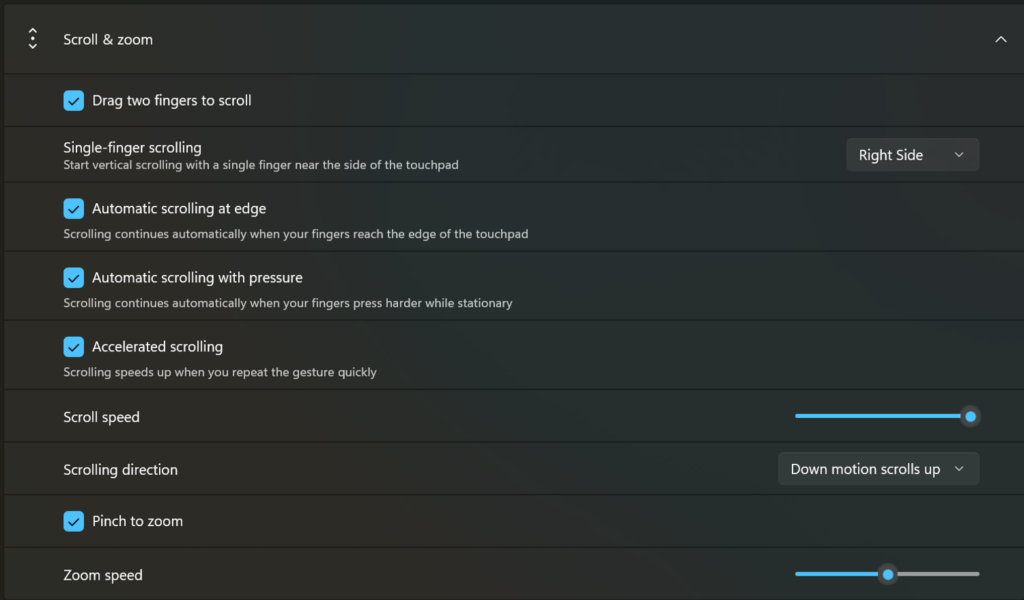

Release channel: Experimental

We’re adding new gesturing-related functionality to precision touchpads in Settings. The new features should be widely available across applications, with the exception that WinUI3-based UI requires new WinAppSDK versions for complete functionality - we’re in the process of bringing the necessary changes to versions 1.8 and 2.0.

- Scroll / zoom speed: control the baseline speed for these gestures

- Automatic scrolling: scrolling continues indefinitely without lifting your fingers. Activate by either bringing your fingers near the edge of the touchpad while scrolling, or holding them still and pressing harder (requires hardware support).

- Accelerated scrolling: repeated scrolling gestures increase the scroll speed, allowing quick traversal of long documents.

- Single-finger scrolling: perform a vertical scroll with a single finger starting from the left or right side of the touchpad.

Touchpad improvements bring new gesture capabilities including automatic scrolling, gesture speed controls, accelerated scrolling, and optional single-finger scrolling support.


[EDU Licensing]

Release channel: Experimental, Beta Channel

Free upgrade path to Windows 11 Pro Education for K–12

Windows Insiders in K–12 education environments can now experience a seamless upgrade path from Windows 11 Home to Windows 11 Pro Education edition at no additional cost. This enables educational organizations to procure Windows 11 Home devices, upgrade them to Windows 11 Pro Education, and bring devices under school management. See release notes for more information.

Thanks, Stephen and the Windows Insider Program team

Feature: mode handler APIs for plan approval and rate-limit recovery

Applications can now register callbacks for exitPlanMode.request and autoModeSwitch.request from the Copilot runtime, giving full control over plan-mode transitions and automatic model switching after rate-limit events. (#1228)

TypeScript:

    const session = await client.createSession({
        onExitPlanMode: async (request) => ({ approved: true }),
        onAutoModeSwitch: (request) => "yes",
    });

C#:

    var session = await client.CreateSessionAsync(new SessionConfig
    {
        OnExitPlanMode = (request, _) => Task.FromResult(new ExitPlanModeResult { Approved = true }),
        OnAutoModeSwitch = (request, _) => Task.FromResult(AutoModeSwitchResponse.Yes),
    });

- Python: on_exit_plan_mode / on_auto_mode_switch kwargs on create_session()

- Go: ExitPlanModeHandler / AutoModeSwitchHandler fields on SessionConfig

Feature: SDK tracing diagnostics

The .NET, Python, and Rust SDKs now emit structured diagnostic logs covering CLI startup, TCP connection, JSON-RPC request timing, session lifecycle, and error paths. (#1217)

C#:

    var client = new CopilotClient(new CopilotClientOptions
    {
        Logger = loggerFactory.CreateLogger<CopilotClient>(),
    });

Python emits logs via stdlib logging under copilot.* loggers at DEBUG level. Rust uses tracing structured fields; wire up a tracing_subscriber as usual.
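For the Python side, surfacing those DEBUG records should only need standard-library logging configuration. A minimal sketch, assuming the parent logger is named copilot (as the copilot.* pattern suggests); the helper name and the child logger copilot.client used in the demo are hypothetical, not names confirmed by the SDK.

```python
import io
import logging


def enable_copilot_debug_logging(stream=None) -> logging.Handler:
    """Attach a DEBUG-level stream handler to the parent "copilot" logger.

    Child loggers such as copilot.<something> inherit the level and
    propagate their records up to this handler.
    """
    logger = logging.getLogger("copilot")
    logger.setLevel(logging.DEBUG)
    handler = logging.StreamHandler(stream)  # defaults to stderr when stream is None
    handler.setFormatter(logging.Formatter("%(name)s %(levelname)s %(message)s"))
    logger.addHandler(handler)
    return handler


# Demo with an in-memory stream; "copilot.client" is a hypothetical child logger.
buffer = io.StringIO()
enable_copilot_debug_logging(buffer)
logging.getLogger("copilot.client").debug("connected")
print(buffer.getvalue(), end="")
```

Configuring the parent rather than each child keeps the setup in one place and picks up any copilot.* loggers added in later releases.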

Feature: enableSessionTelemetry session option

A new enableSessionTelemetry option on SessionConfig and ResumeSessionConfig lets applications explicitly enable or disable the runtime's internal session telemetry. (#1224)

TypeScript:

    const session = await client.createSession({ enableSessionTelemetry: true });

C#:

    var session = await client.CreateSessionAsync(new SessionConfig { EnableSessionTelemetry = true });

Other changes

- bugfix: [C#] session-event enums are now string-backed readonly structs, preventing deserialization failures when the runtime adds new enum values (#1226)

- bugfix: [Rust] binary tool result resource blobs now default to application/octet-stream when mimeType is absent (#1222)
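The idea behind both fixes can be illustrated in a few lines. The sketch below is a Python analogue, not the SDK's C# or Rust code: the event names, class names, and the blob dictionary shape are made up for illustration. It contrasts a closed enum, which rejects values it does not list, with a string-backed value type that accepts any string, and shows a default MIME type fallback.

```python
from dataclasses import dataclass
from enum import Enum


class ClosedEventType(Enum):
    """Closed enum: parsing an unlisted value raises ValueError."""
    STARTED = "started"
    IDLE = "idle"


@dataclass(frozen=True)
class OpenEventType:
    """String-backed value: any string round-trips; known values get names."""
    value: str


# Hypothetical known values, for illustration only.
STARTED = OpenEventType("started")
IDLE = OpenEventType("idle")


def parses_with_closed_enum(raw: str) -> bool:
    """Return True when the closed enum can represent the raw value."""
    try:
        ClosedEventType(raw)
        return True
    except ValueError:
        return False


def mime_type_of(blob: dict) -> str:
    """Fall back to a generic binary type when mimeType is missing or empty."""
    return blob.get("mimeType") or "application/octet-stream"


print(parses_with_closed_enum("newly.added"))   # closed enum cannot represent it
print(OpenEventType("newly.added"))             # open type keeps the raw string
print(mime_type_of({"blob": "AAEC"}))
```

The trade-off is that an open type gives up exhaustiveness checking in exchange for forward compatibility with values added by a newer runtime.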

New contributors

- @sunbrye made their first contribution in #1208

- @cschleiden made their first contribution in #1222

- @IeuanWalker made their first contribution in #1232

Generated by Release Changelog Generator · ● 225.2K


- Fix SDK documentation typos

Co-authored-by: Copilot 223556219+Copilot@users.noreply.github.com

- Preserve embedded CLI verbose log output

Co-authored-by: Copilot 223556219+Copilot@users.noreply.github.com
