Introduction

As organizations move from AI-assisted applications to agentic workflows, MCP servers are becoming a critical integration layer between agents, tools, APIs, data sources, and enterprise systems. Azure API Management already helps teams bring MCP servers under enterprise governance. But as MCP adoption scales, platform teams need more than basic exposure. They need a way to package MCP servers for the right consumers, understand tool usage in detail, manage changes safely, and automate configuration across environments.

These are familiar API management challenges, and the patterns organizations already use for APIs can now be applied to MCP servers as well. We are excited to announce the general availability of new MCP server management capabilities in Azure API Management:

- Add MCP servers to products to package and govern MCP capabilities for specific consumers

- MCP tool observability to trace tool usage, logs, errors, and payload context

- MCP server versioning to run multiple versions side by side and manage change safely

- Management API and Bicep support to automate MCP server configuration as part of CI/CD workflows

Together, these capabilities extend MCP server management in Azure API Management and help make MCP servers first-class managed resources — productized, observable, versionable, and automatable.

Why MCP server management matters

MCP gives agents a standard way to connect with tools and external capabilities. That standardization is powerful, but it also introduces a new operational surface for enterprises.

Without a management layer, teams can quickly run into questions such as:

- Which MCP servers are approved for use?

- Who can access each server?

- How do we expose MCP servers to different developer or agent audiences?

- How do we monitor tool calls, latency, errors, and cost?

- How do we run preview and production versions side by side?

- How do we automate MCP server configuration across environments?

These are not just developer experience questions. They are enterprise governance questions. With Azure API Management, MCP servers can now be managed using the same core patterns organizations already use for APIs: products, subscriptions, policies, observability, versioning, and automation.

What’s new

1. Add MCP servers to products

Azure API Management products are a proven way to package APIs for consumption. With this release, you can also add one or more MCP servers to APIM products. This makes it easier to expose MCP capabilities to specific consumers, teams, applications, or agent experiences using familiar product-based governance.

For example, a platform team can create a product for internal agents that includes approved MCP servers such as:

- Customer profile lookup

- Order status retrieval

- Knowledge base search

- Ticket creation

- Workflow automation tools

By adding MCP servers to products, teams can use familiar controls such as subscriptions, quotas, approval workflows, and access management to govern how MCP capabilities are consumed.
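To make the consumer side concrete, here is a minimal sketch of how an agent might build an MCP request to a server exposed through an APIM product. It assumes the product uses subscription keys passed in the standard `Ocp-Apim-Subscription-Key` header (your product may instead require OAuth), and the gateway URL and tool name are hypothetical. The message body is a JSON-RPC 2.0 `tools/list` or `tools/call` request as defined by MCP; a real client first sends `initialize` and then echoes the `Mcp-Session-Id` header the server returns, and both steps are omitted here.

```python
import json
import uuid

# Hypothetical gateway URL; substitute your APIM instance and MCP server path.
GATEWAY_URL = "https://contoso.azure-api.net/customer-profile-mcp/mcp"


def build_mcp_request(url, subscription_key, method, params=None, request_id=None):
    """Return (url, headers, body) for a JSON-RPC 2.0 MCP request sent through APIM.

    The subscription key ties the call to an APIM product subscription, which is
    where product-level access, quotas, and approval workflows are enforced.
    """
    message = {
        "jsonrpc": "2.0",
        "id": request_id if request_id is not None else str(uuid.uuid4()),
        "method": method,
    }
    if params is not None:
        message["params"] = params
    headers = {
        "Content-Type": "application/json",
        # MCP Streamable HTTP clients accept both JSON and SSE responses.
        "Accept": "application/json, text/event-stream",
        # Standard APIM header for a product subscription key.
        "Ocp-Apim-Subscription-Key": subscription_key,
    }
    return url, headers, json.dumps(message).encode("utf-8")


# Discover the tools the product exposes, then invoke one (hypothetical tool name).
list_req = build_mcp_request(GATEWAY_URL, "<subscription-key>", "tools/list", request_id=1)
call_req = build_mcp_request(
    GATEWAY_URL,
    "<subscription-key>",
    "tools/call",
    {"name": "get_customer_profile", "arguments": {"customerId": "C-1001"}},
    request_id=2,
)
# To send: urllib.request.urlopen(urllib.request.Request(url, data=body, headers=headers, method="POST"))
```

Because the subscription key is bound to a product, revoking or suspending that subscription in APIM cuts off access to every MCP server in the product at once.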

Why it matters: MCP servers are no longer isolated endpoints. They can be bundled, governed, and delivered as secure, consumable products.

2. MCP tool observability

As agents use MCP servers to discover and invoke tools, teams need more than basic traffic visibility. They need end-to-end trace context for each agent-to-tool interaction. With MCP observability in Azure API Management, teams can inspect key MCP-specific details, including:

- Operation context: whether the request was a tools/list or tools/call operation

- Session context: the MCP session ID, recorded as gen_ai.conversation.id

- Client context: MCP client name and version

- Protocol context: MCP protocol name and version

- Server context: MCP server name and version

- Access context: authentication type and API type

- Tool context: tool name and tool type for tool invocation traces

- Error context: error type and error message when a call fails

- Payload context: tool invocation arguments and results when payload logging is enabled

This is especially important for agentic workflows, where a single user request may trigger multiple tool calls across different systems. With APIM, MCP traffic can be traced, inspected, and monitored using the same operational practices teams already use across their API estate.
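As an illustration of why session context matters, the sketch below groups exported trace records by MCP session and counts failures per tool, so one user request that fans out into several tool calls can be read as a unit. Only `gen_ai.conversation.id` comes from the list above; the other attribute keys (`mcp.method.name`, `gen_ai.tool.name`, `error.type`) and the records themselves are assumptions for illustration, so check the attribute names your APIM logs and traces actually emit.

```python
from collections import Counter, defaultdict

# Hypothetical exported records. Only gen_ai.conversation.id is taken from the
# text; the remaining keys are assumed attribute names.
records = [
    {"gen_ai.conversation.id": "sess-1", "mcp.method.name": "tools/list"},
    {"gen_ai.conversation.id": "sess-1", "mcp.method.name": "tools/call",
     "gen_ai.tool.name": "lookup_customer"},
    {"gen_ai.conversation.id": "sess-1", "mcp.method.name": "tools/call",
     "gen_ai.tool.name": "get_order_status", "error.type": "timeout"},
    {"gen_ai.conversation.id": "sess-2", "mcp.method.name": "tools/call",
     "gen_ai.tool.name": "lookup_customer"},
]


def summarize(records):
    """Group tool invocations by MCP session and count errors per tool."""
    calls_by_session = defaultdict(list)
    errors_by_tool = Counter()
    for record in records:
        if record.get("mcp.method.name") != "tools/call":
            continue  # tools/list discovery requests carry no tool name
        session = record["gen_ai.conversation.id"]
        tool = record.get("gen_ai.tool.name", "<unknown>")
        calls_by_session[session].append(tool)
        if "error.type" in record:
            errors_by_tool[tool] += 1
    return dict(calls_by_session), dict(errors_by_tool)


sessions, errors = summarize(records)
print(sessions)  # {'sess-1': ['lookup_customer', 'get_order_status'], 'sess-2': ['lookup_customer']}
print(errors)    # {'get_order_status': 1}
```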

Why it matters: MCP servers are not just accessible through APIM — they are observable. Platform teams can trace tool calls, inspect errors, and understand MCP usage with the same operational discipline they expect from managed APIs.

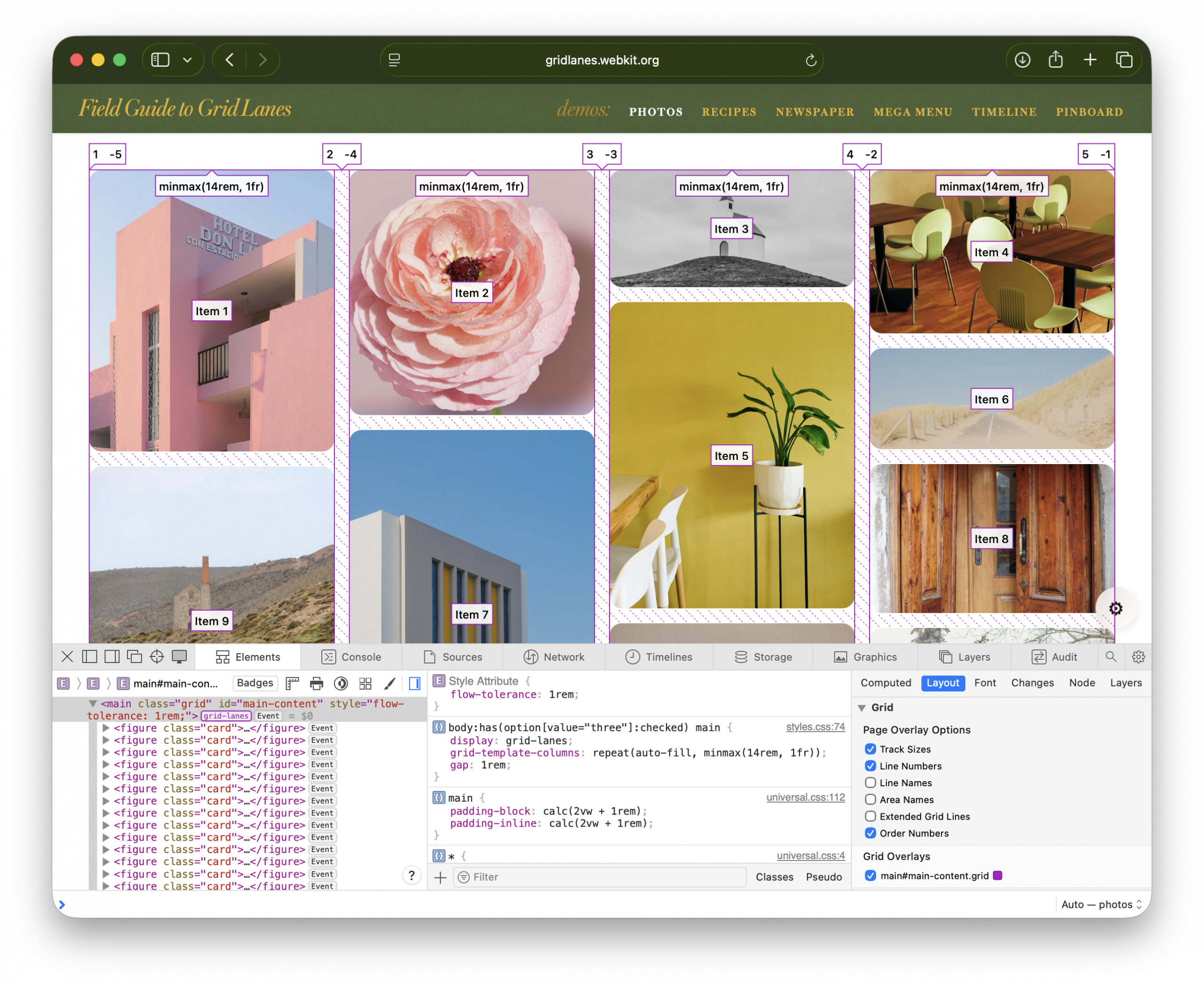

3. Expose multiple MCP versions

Enterprise teams need safe ways to evolve MCP servers over time. With MCP server versioning in Azure API Management, you can expose multiple versions of the same MCP server side by side. This allows teams to run a stable GA version while introducing a preview or next version for early adopters.

For example:

- v1 can serve the majority of production traffic.

- v2 can be exposed to a subset of consumers for testing.

- Teams can monitor adoption, errors, latency, and behavior.

- Once the new version is validated, v2 can be promoted with confidence.

This pattern is especially useful when MCP tools evolve, schemas change, new capabilities are added, or teams want to validate agent behavior before rolling changes out broadly.
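One way to expose v2 to "a subset of consumers" is to assign each consumer to a version deterministically, so its agents keep seeing the same version while the preview is validated. The sketch below is a hypothetical platform-side helper, not an APIM feature: it assumes a path-segment version scheme (APIM also supports header and query-string schemes), and the base URL and `/v1/mcp`, `/v2/mcp` paths are made up for illustration.

```python
import hashlib

# Hypothetical base URL and path-segment version scheme.
BASE_URL = "https://contoso.azure-api.net/orders-mcp"


def pick_version(consumer_id: str, preview_percent: int) -> str:
    """Place a consumer in the v2 preview cohort or keep it on v1.

    Hashing the consumer ID makes the choice stable across calls, so raising
    preview_percent only ever moves consumers from v1 to v2.
    """
    if not 0 <= preview_percent <= 100:
        raise ValueError("preview_percent must be between 0 and 100")
    digest = hashlib.sha256(consumer_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # bucket in 0..99
    return "v2" if bucket < preview_percent else "v1"


def mcp_endpoint(consumer_id: str, preview_percent: int) -> str:
    """Build the versioned MCP endpoint a consumer should call."""
    return f"{BASE_URL}/{pick_version(consumer_id, preview_percent)}/mcp"
```

Promoting v2 then amounts to raising `preview_percent` to 100 while monitoring adoption, errors, and latency, and retiring v1 once no consumers remain on it.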

Why it matters: MCP servers can now follow a safer lifecycle model: preview, validate, route, promote, and retire.

4. Management API and Infrastructure as Code

MCP server management also needs to work at enterprise scale. With Management API and Infrastructure as Code support, teams can provision and configure MCP servers programmatically through Azure API Management APIs and automation pipelines. This allows platform teams to define MCP server resources as part of repeatable deployment workflows using tools such as Bicep, Terraform, ARM, REST APIs, and CI/CD pipelines.

Teams can automate configuration for:

- MCP server endpoints

- Runtime and transport settings

- Authentication configuration

- Metadata and ownership

- Versioning

- Product association

- Policies

- Environment promotion

This is critical for organizations that need consistent MCP governance across development, test, staging, and production environments.
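A common way to keep one Bicep template consistent across environments is to vary only its parameters. The sketch below generates one ARM/Bicep deployment parameters file per environment; the file envelope (`$schema`, `contentVersion`, `parameters` with `value` entries) is the standard parameters format, but the parameter names and values are hypothetical and must match the `param` declarations in your own template.

```python
import json

# Hypothetical parameters; names must match `param` declarations in your Bicep file.
ENVIRONMENTS = {
    "dev": {"apimServiceName": "apim-contoso-dev", "mcpServerVersion": "v2",
            "productName": "internal-agents-dev"},
    "test": {"apimServiceName": "apim-contoso-test", "mcpServerVersion": "v2",
             "productName": "internal-agents-test"},
    "prod": {"apimServiceName": "apim-contoso-prod", "mcpServerVersion": "v1",
             "productName": "internal-agents"},
}

SCHEMA = "https://schema.management.azure.com/schemas/2019-04-01/deploymentParameters.json#"


def parameters_file(values: dict) -> str:
    """Render values in the ARM deployment parameters file format."""
    doc = {
        "$schema": SCHEMA,
        "contentVersion": "1.0.0.0",
        "parameters": {name: {"value": value} for name, value in values.items()},
    }
    return json.dumps(doc, indent=2)


# One file per environment; a pipeline could write these to disk and pass each to
# `az deployment group create --template-file main.bicep --parameters @<file>`.
files = {
    f"mcp-server.{env}.parameters.json": parameters_file(values)
    for env, values in ENVIRONMENTS.items()
}
```

Keeping the differences in reviewed parameter files, rather than in hand-edited portal settings, is what lets MCP server changes go through the same pull-request and promotion flow as other infrastructure.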

Why it matters: MCP server management can now be automated, reviewed, deployed, and governed like the rest of your API platform.

How these capabilities work together

Individually, each capability solves an important operational need. Together, they create a complete management model for MCP servers in Azure API Management. A platform team can:

- Register or expose MCP servers through Azure API Management.

- Package them into products for specific consumers.

- Apply access controls, subscriptions, quotas, and policies.

- Observe tool-level usage, latency, errors, traces, and cost.

- Run multiple versions side by side.

- Promote changes safely.

- Automate deployment through APIs and Infrastructure as Code.

This brings the full API management playbook to MCP. Instead of treating MCP servers as unmanaged agent extensions, organizations can operate them as governed enterprise resources.

Example scenario

Imagine a company building internal copilots for customer support, sales, and operations. Each copilot needs access to different tools:

- Customer lookup

- Order history

- Case management

- Knowledge search

- Refund workflows

- Escalation workflows

With MCP and Azure API Management, the platform team can expose these capabilities as MCP servers and organize them into products. The customer support copilot can subscribe to the support product, and the sales copilot can subscribe to the sales product. Early adopters can be routed to a preview version of an MCP server. Operations teams can monitor usage, errors, latency, traces, and cost, and platform teams can automate the entire setup across environments. The result is a more governed and scalable way to bring MCP-based tools into enterprise agent workflows.
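The product model in this scenario can be pictured as a simple mapping: products group MCP servers, and each copilot subscribes to one or more products. The sketch below is a conceptual model only, not APIM's API (APIM enforces this at the gateway), and the assignment of tools to products and all names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Product:
    name: str
    mcp_servers: set[str]
    subscribers: set[str] = field(default_factory=set)


# Hypothetical mapping of the scenario's copilots to products.
products = [
    Product("support",
            {"customer-lookup", "case-management", "knowledge-search",
             "refund-workflows", "escalation-workflows"},
            {"support-copilot"}),
    Product("sales",
            {"customer-lookup", "order-history", "knowledge-search"},
            {"sales-copilot"}),
    Product("operations",
            {"order-history", "escalation-workflows"},
            {"operations-copilot"}),
]


def reachable_servers(consumer: str) -> set[str]:
    """Union of MCP servers across every product the consumer subscribes to."""
    return set().union(*(p.mcp_servers for p in products if consumer in p.subscribers))
```

In this model the sales copilot cannot reach the refund workflows server, because no product it subscribes to includes it; granting access means changing a product or subscription, not the copilot.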

Getting started

To get started with MCP server management in Azure API Management:

- Create or identify an MCP server you want to expose through Azure API Management.

- Add the MCP server as a managed resource in APIM.

- Add the MCP server to an APIM product.

- Configure access, subscriptions, quotas, and approval workflows.

- Enable observability to monitor tool-level usage and traces.

- Use versioning to manage preview and production versions.

- Use the Management API or Infrastructure as Code to automate configuration.

Conclusion

MCP is quickly becoming an important standard for connecting agents to tools and enterprise capabilities. But for MCP to succeed in production, organizations need more than connectivity. They need governance, lifecycle management, observability, and automation. With these new MCP server management capabilities in Azure API Management, platform teams can manage MCP servers using the same trusted patterns they already use for APIs.

MCP servers are now first-class APIM resources — productized, observable, versionable, and automatable. We are excited to see how customers use these capabilities to build the next generation of governed, enterprise-ready agentic applications.